Selected Work

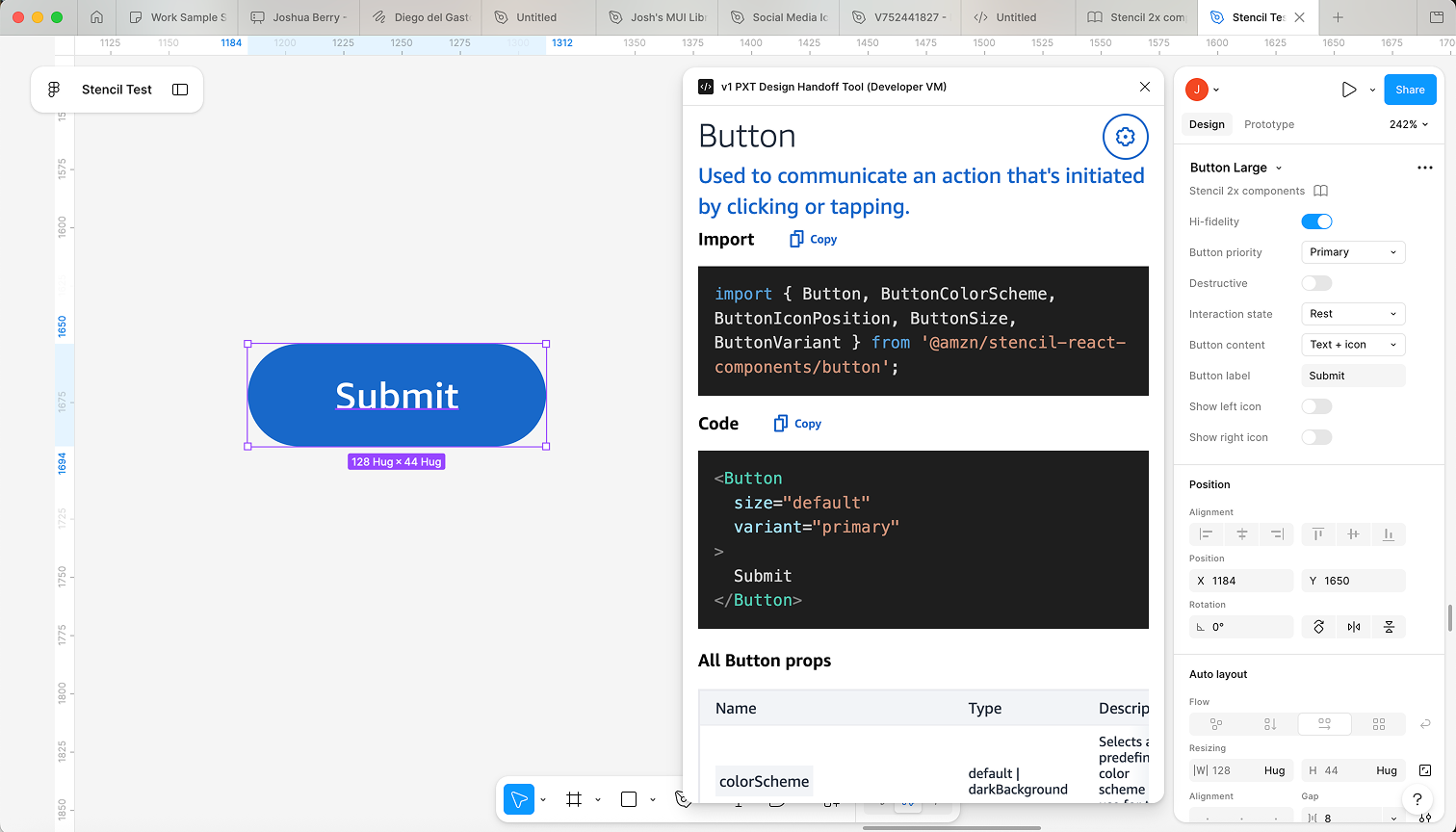

Figma Design Handoff Plugin

In 2020, built an original tool that mapped Amazon's Figma design system to its React component library: click any component, get exact production code and accessibility props. Figma shipped its own version of the concept in 2024 and called it Code Connect.

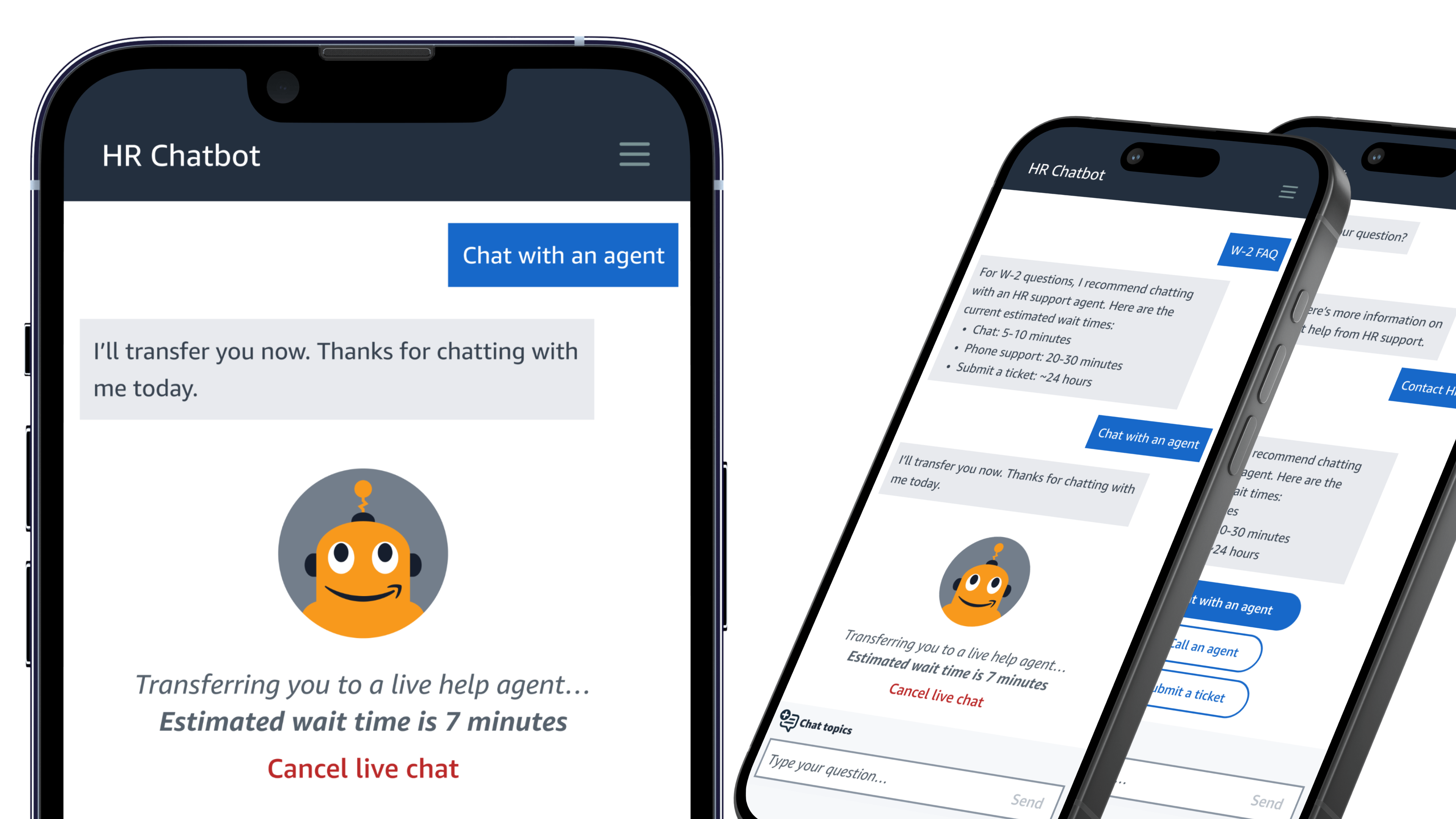

Amazon Employee HR Chatbot

Led a cross-functional redesign of Amazon's employee HR chatbot and introduced the product's first structured CSAT measurement, which landed at 4.65/5.

Amazon Affinity Groups — Employee App

Led front-end development for a hybrid React Native / React app serving Amazon's global employee base — production TypeScript, GraphQL, i18n, CI/CD, and full accessibility compliance.

Apple.com — Product Launch Pages

Senior Front-End Developer on Apple's product launch team. Built the iMac, iMac Pro, Mac Pro, MacBook Pro, MacBook Air, Mac mini, Safari, and Newsroom pages on one of the most-trafficked sites in the world. The App Store page still runs the same design today.

IBM Watson Cognitive Dress

Built the software and hardware system behind an ML-powered dress worn at the Met Gala — Watson sentiment data translated into real-time LED color, live on the red carpet. Now in the Henry Ford Museum.

2020 Lincoln Continental UX

Built a real-time WebSocket network across 5 apps and custom Arduino hardware, embedded in a Lincoln Continental and shipped to Shanghai for user testing. Six weeks from kickoff to demo.